Workers think they're training their replacements, and for once, the anxiety has a point.

The Summary

- 30% of Americans now believe AI will make their jobs obsolete, and college students are switching majors in response to perceived AI risk

- Companies are deploying "digital employees" and openly discussing AI-driven headcount reduction while claiming they're just freeing humans for "higher-value work"

- The paradox: every task you teach an AI improves the model that might not need you tomorrow

The Signal

The fear isn't irrational. BNY brought on digital employees for "mundane work" with the official line that it frees up staff for other tasks. That's the script every company reads from before a restructuring. You don't invest billions in efficiency technology because you want the same headcount doing fuzzier work. You invest because you want different math on the P&L.

But here's where worker anxiety meets a harder reality. Most jobs aren't just task sequences you can record and replay. They're judgment calls under ambiguity, relationship management, and context-switching across domains the AI has never seen. Workplace analysts like JP Gownder at Forrester say we're "not close" to simple one-for-one replacement in almost any sphere. The job won't vanish. It will morph.

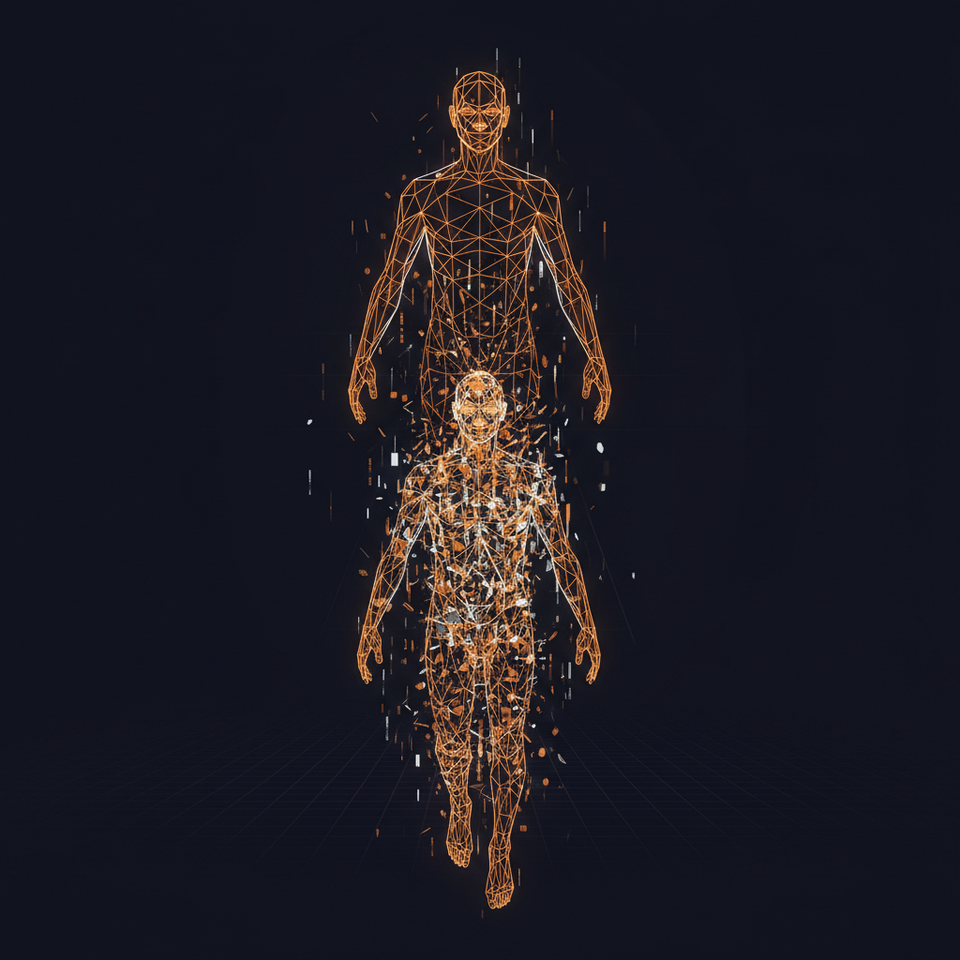

What's actually happening is role compression. Three people who used AI to 3x their output become two people. Then one. Not because the AI learned to do everything they did, but because the parts that required a human got smaller and the parts that didn't got automated. The worker who adopts AI fastest doesn't train their replacement. They become the last human standing when the music stops.

This explains why career educators like Erin McGoff keep hearing the same fear. Workers see the future clearly: their jobs will change, some will disappear, and the people who can't figure out which parts of their role are human-native will be the ones who leave first.

The Implication

Stop thinking about whether AI will take your job. Start mapping which parts of your role require human judgment, relationship capital, or cross-domain synthesis that no agent can replicate yet. Those are your moats. Everything else is surface area for automation. The goal isn't to avoid using AI; it's to use it so well that you become the operator nobody can replace, the human the agents work for instead of around.

Source: Business Insider Tech